AMD Radeon HD 7970 Review: 28nm And Graphics Core Next, Together As One

by Ryan Smith on December 22, 2011 12:00 AM EST

Posted in: GPUs, AMD, Radeon, ATI, Radeon HD 7000

A Quick Refresher, Cont.

Having established what’s bad about VLIW as a compute architecture, let’s discuss what makes a good compute architecture. The most fundamental aspect of compute is that developers want stable and predictable performance, something that VLIW didn’t lend itself to because it was dependency limited. Architectures that can’t work around dependencies will see their performance vary due to those dependencies. Consequently, if you want an architecture with stable performance that’s going to be good for compute workloads then you want an architecture that isn’t impacted by dependencies.

Ultimately dependencies and ILP go hand-in-hand. If you can extract ILP from a workload, then your architecture is by definition bursty. An architecture that can’t extract ILP may not be able to achieve the same level of peak performance, but it will not burst and hence it will be more consistent. This is the guiding principle behind NVIDIA’s Fermi architecture; GF100/GF110 have no ability to extract ILP, and developers love it for that reason.

So with those design goals in mind, let’s talk GCN.

VLIW is a traditional and well proven design for parallel processing. But it is not the only traditional and well proven design for parallel processing. For GCN AMD will be replacing VLIW with what’s fundamentally a Single Instruction Multiple Data (SIMD) vector architecture (note: technically VLIW is a subset of SIMD, but for the purposes of this refresher we’re considering them to be different).

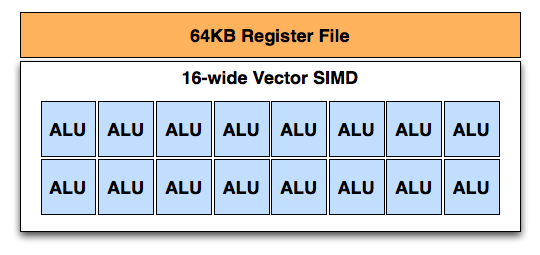

A Single GCN SIMD

At the most fundamental level AMD is still using simple ALUs, just like Cayman before it. In GCN these ALUs are organized into a single SIMD unit, the smallest unit of work for GCN. A SIMD is composed of 16 of these ALUs, along with a 64KB register file for the SIMD to keep data in.

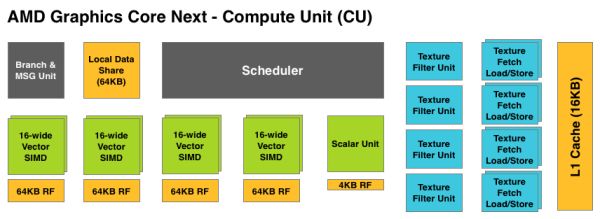

Above the individual SIMD we have a Compute Unit, the smallest fully independent functional unit. A CU is composed of 4 SIMD units, a hardware scheduler, a branch unit, L1 cache, a local data share, 4 texture units (each with 4 texture fetch load/store units), and a special scalar unit. The scalar unit is responsible for all of the arithmetic operations the simple ALUs can’t do or won’t do efficiently, such as conditional statements (if/then) and transcendental operations.

Because the smallest unit of work is the SIMD and a CU has 4 SIMDs, a CU works on 4 different wavefronts at once. As wavefronts are still 64 operations wide, each cycle a SIMD will complete ¼ of the operations on its respective wavefront, and after 4 cycles the current instruction for the active wavefront is completed.
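To make the arithmetic concrete, here is a minimal Python sketch of the numbers quoted above. The function and constant names are our own, and the 32-bit register size is an assumption on our part rather than something stated in this section, so treat the register figures as illustrative.

```python
import math

# Figures taken from the text above.
ALUS_PER_SIMD = 16
SIMDS_PER_CU = 4
WAVEFRONT_SIZE = 64
REGISTER_FILE_BYTES = 64 * 1024  # per SIMD

# Assumption (not stated in the article): registers are 32 bits wide.
BYTES_PER_REGISTER = 4


def cycles_per_wavefront_instruction(wavefront_size: int = WAVEFRONT_SIZE,
                                     alus: int = ALUS_PER_SIMD) -> int:
    """Cycles a SIMD needs to push one instruction through a whole wavefront."""
    return math.ceil(wavefront_size / alus)


def cu_operations_per_cycle(simds: int = SIMDS_PER_CU,
                            alus: int = ALUS_PER_SIMD) -> int:
    """ALU operations a CU completes per cycle when every SIMD is busy."""
    return simds * alus


def registers_per_work_item(resident_wavefronts: int) -> int:
    """32-bit registers available to each work-item when the SIMD's register
    file is split evenly across the given number of resident wavefronts."""
    total_registers = REGISTER_FILE_BYTES // BYTES_PER_REGISTER
    return total_registers // (resident_wavefronts * WAVEFRONT_SIZE)


if __name__ == "__main__":
    print(cycles_per_wavefront_instruction())  # 64 / 16
    print(cu_operations_per_cycle())           # 4 * 16
    print(registers_per_work_item(1))
```

Under these assumptions, a single wavefront on a SIMD finishes an instruction in 4 cycles, and the CU as a whole retires 64 ALU operations per cycle, which works out to one wavefront's worth of work per cycle spread across 4 different wavefronts.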

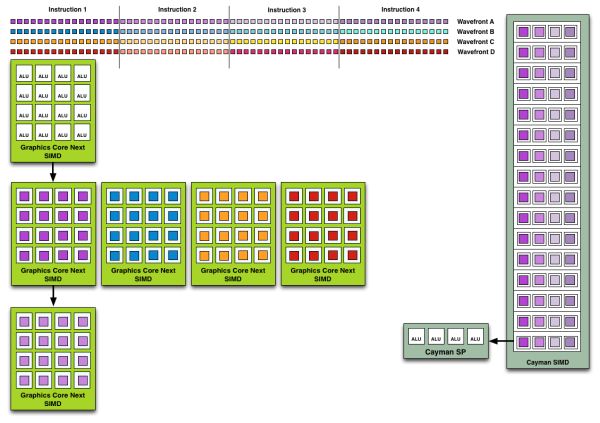

Cayman by comparison would attempt to execute multiple instructions from the same wavefront in parallel, rather than executing a single instruction from multiple wavefronts. This is where Cayman got bursty – if the instructions were in any way dependent, Cayman would have to let some of its ALUs go idle. GCN on the other hand does not face this issue. Because each SIMD handles a single instruction at a time from its own wavefront, the SIMDs are in no way attempting to take advantage of ILP, and their performance will be very consistent.

Wavefront Execution Example: SIMD vs. VLIW. Not To Scale - Wavefront Size 16

There are other aspects of GCN that influence its performance – the scalar unit plays a huge part – but in comparison to Cayman, this is the single biggest difference. By not taking advantage of ILP, but instead taking advantage of Thread Level Parallelism (TLP) in the form of executing more wavefronts at once, GCN will be able to deliver high compute performance and to do so consistently.
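The ILP versus TLP trade-off can be sketched with a toy model in Python. This is not how either chip's scheduler or AMD's shader compiler actually works: the in-order greedy bundle packer, the function names, and the layout of 16 lanes with 4 ALU slots each on the VLIW side are all simplifying assumptions of ours. Both sides have 64 ALUs in total, so the only thing that varies is how dependencies affect them.

```python
import math
from typing import List, Set

VLIW_WIDTH = 4       # VLIW4: up to 4 operations per bundle
LANES = 16           # lanes per SIMD in this toy model
WAVEFRONT_SIZE = 64
CYCLES_PER_ISSUE = WAVEFRONT_SIZE // LANES  # 4 cycles per wavefront issue


def pack_vliw(deps: List[Set[int]], width: int = VLIW_WIDTH) -> List[List[int]]:
    """Greedily pack instructions, in program order, into VLIW bundles.

    deps[i] holds the indices of earlier instructions that instruction i
    reads from. An instruction joins the current bundle only if a slot is
    free and none of its inputs are produced inside that same bundle.
    """
    bundles: List[List[int]] = []
    current: List[int] = []
    for i, needed in enumerate(deps):
        if len(current) == width or needed & set(current):
            bundles.append(current)
            current = []
        current.append(i)
    if current:
        bundles.append(current)
    return bundles


def vliw_cycles(deps: List[Set[int]], wavefronts: int = 4) -> int:
    """Toy VLIW4 model: wavefronts run one after another, one bundle per issue."""
    return wavefronts * len(pack_vliw(deps)) * CYCLES_PER_ISSUE


def simd_cycles(deps: List[Set[int]], wavefronts: int = 4, simds: int = 4) -> int:
    """Toy GCN model: each SIMD runs one wavefront, one instruction per issue."""
    rounds = math.ceil(wavefronts / simds)
    return rounds * len(deps) * CYCLES_PER_ISSUE


# Hypothetical 8-instruction shaders: fully independent vs. a serial chain.
independent = [set() for _ in range(8)]
chain = [set()] + [{i - 1} for i in range(1, 8)]

if __name__ == "__main__":
    for name, program in (("independent", independent), ("chain", chain)):
        print(name, vliw_cycles(program), simd_cycles(program))
```

In this model the VLIW side matches the SIMD side only when every instruction is independent, and it takes four times as long on a fully serial chain, while the SIMD side takes the same number of cycles in both cases. That is the consistency argument in miniature, with the caveat that a real compiler can reorder instructions and real hardware hides latency in ways this sketch ignores.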

Bringing this all together, to make a complete GPU a number of these GCN CUs will be combined with the rest of the parts we’re accustomed to seeing on a GPU. A frontend is responsible for feeding the GPU, as it contains both the command processors (ACEs) responsible for feeding the CUs and the geometry engines responsible for geometry setup. Meanwhile coming after the CUs will be the ROPs that handle the actual render operations, the L2 cache, the memory controllers, and the various fixed function controllers such as the display controllers, PCIe bus controllers, Universal Video Decoder, and Video Codec Engine.

At the end of the day, if AMD has done their homework, GCN should significantly improve compute performance relative to VLIW4 while gaming performance should be just as good. Gaming shader operations will execute across the CUs in a much different manner than they did across VLIW, but they should do so at a similar speed. And games that use compute shaders should directly benefit from the compute improvements. It’s by building out a GPU in this manner that AMD can make an architecture that’s significantly better at compute without sacrificing gaming performance, and this is why the resulting GCN architecture is balanced for both compute and graphics.

292 Comments

MadMan007 - Thursday, December 22, 2011 - link

More stuff missing on page 9: [AF filter test image] [download table]

Ryan Smith - Thursday, December 22, 2011 - link

Yep. Still working on it. Hold tight

MadMan007 - Thursday, December 22, 2011 - link

Np, just not used to seeing incomplete articles published on Anandtech that aren't clearly 'previews'...wasn't sure if you were aware of all the missing stuff.

DoktorSleepless - Thursday, December 22, 2011 - link

Crysis won't be defeated until we're able to play at a full 60fps with 4x super sampling. It looks ugly without the foliage AA.

Ryan Smith - Thursday, December 22, 2011 - link

I actually completely agree. That's even farther off than 1920 just with MSAA, but I'm looking forward to that day.

chizow - Thursday, December 22, 2011 - link

Honestly Crysis may be defeated once Nvidia releases its driver-level FXAA injector option. Yes, FXAA can blur textures but it also does an amazing job at reducing jaggies on both geometry and transparencies at virtually no impact on performance.

There's leaked driver versions (R295.xx) out that allow this option now, hopefully we get them officially soon as this will be a huge boon for games like Crysis or games that don't support traditional AA modes at all (GTA4).

Check out the results below:

http://www.hardocp.com/image.html?image=MTMyMjQ1Mz...

AnotherGuy - Thursday, December 22, 2011 - link

If nVidia released this card tomorrow they woulda priced it easily $600... The card succeeds in almost every aspect.... except maybe noise...

chizow - Thursday, December 22, 2011 - link

Funny since both of Nvidia's previous flagship single-GPU cards, the GTX 480 and GTX 580, launched for $499 and were both the fastest single-GPU cards available at the time.

I think Nvidia learned their lesson with the GTX 280, and similarly, I think AMD has learned their lesson as well with underpricing their HD 4870 and HD 5870. They've (finally) learned that in the brief period they hold the performance lead, they need to make the most of it, which is why we are seeing a $549 flagship card from them this time around.

8steve8 - Thursday, December 22, 2011 - link

waiting for amd's 28nm 7770.

this card is overkill in power and money.

tipoo - Thursday, December 22, 2011 - link

Same, we're not going to tax these cards at the most common resolutions until new consoles are out, such is the blessing and curse of console ports.